Introduction

By now almost everybody has heard of LLMs and understands (to some degree) what they do. Because training an LLM is so expensive, organisations are looking for ways to run them effectively without incurring those costs. So, how does an organisation gain a competitive advantage without blowing the budget? One option is Retrieval-Augmented Generation (RAG).

RAG allows you to augment the capabilities of your LLM with your own data. It sidesteps one of the biggest constraints of LLMs, the dependence on model training and training data, so organisations can improve the results an LLM provides by leveraging their own data. With RAG, your data can become your differentiator.

I will go through a simple analogy to explain how RAG works. The purpose of this analogy is to explain RAG to a broader audience, in the hope of breaking down the barriers of expertise.

RAG Analogy

Before we go into the explanation of RAG, I want you to read this definition that I have taken from the Oracle website.

“RAG provides a way to optimize the output of an LLM with targeted information without modifying the underlying model itself; that targeted information can be more up-to-date than the LLM as well as specific to a particular organization and industry. That means the generative AI system can provide more contextually appropriate answers to prompts as well as base those answers on extremely current data.” – Oracle

To most people, the definition above may seem too complicated to understand. That is why I will provide a much simpler and more digestible explanation of RAG. Once you have gone through the analogy, I want you to revisit the definition above and see whether your understanding of it has improved. Most importantly, LEARN WHAT RAG ACTUALLY IS!

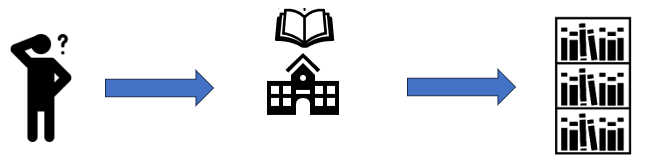

First, let’s imagine you have a question, e.g. “What tools do I need to build a wooden 3-legged coffee table?” This question is specific, and it may not be easy to find a direct answer to it. Let’s start with the traditional approach: how you would have answered this question before the internet existed.

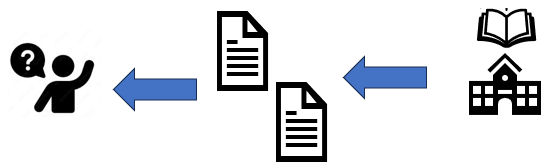

So, you have your question: “What tools do I need to build a wooden 3-legged coffee table?” What you would do is go to a library to gather the information you need. Imagine now that you walk into this library to begin your search.

Now you are in the library, daunted by the number of bookshelves you will have to search to find the relevant information. You will have to scour many different books about tools, wooden tables, and construction instructions. This raises the first issue: wasted time. The next issue is that you don’t want to go through several books, or even an entire single book, e.g. the complete inventory of tools used for wooden tables. What you are really looking for is just the pages that cover the tools and instructions you need.

Now let’s look at the same problem with RAG. We want to reduce the amount of redundant effort involved in looking for relevant information. Most importantly, we want to get only the relevant information, almost instantly.

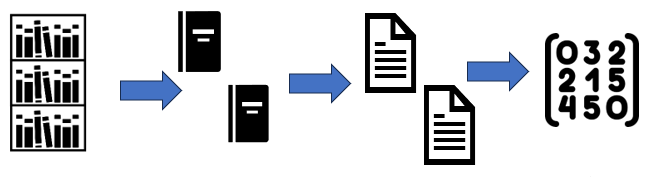

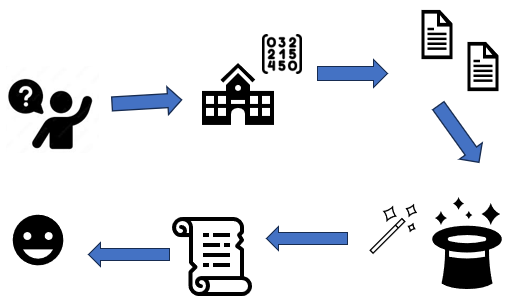

The first thing RAG does is take all the books in the library and break them down into pages that we call “chunks”. Each chunk is then converted into a numeric representation called a vector, using a tool called an embedder. All these vectors are collected and stored in what is called a vector database, essentially a collection of vectors.
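To make the chunk, embed, and store steps concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular product does it: `chunk_text`, `toy_embed`, and `build_vector_store` are hypothetical names, the "embedder" is a toy word-hashing function standing in for a real embedding model, and the "vector database" is just a Python list.

```python
import hashlib
import math


def chunk_text(text: str, chunk_size: int = 50) -> list[str]:
    """Split a book's text into "pages" (chunks) of at most chunk_size words."""
    words = text.split()
    return [
        " ".join(words[i:i + chunk_size])
        for i in range(0, len(words), chunk_size)
    ]


def toy_embed(text: str, dims: int = 64) -> list[float]:
    """Toy stand-in for an embedder: hash each word into one of `dims` slots.

    A real embedder is a trained model that captures meaning; this one only
    counts words, so it is an assumption made purely for illustration.
    """
    vector = [0.0] * dims
    for word in text.lower().split():
        digest = hashlib.md5(word.encode("utf-8")).digest()
        slot = int.from_bytes(digest[:4], "big") % dims
        vector[slot] += 1.0
    # Normalise to unit length so vectors of different chunks are comparable.
    norm = math.sqrt(sum(v * v for v in vector))
    return [v / norm for v in vector] if norm else vector


def build_vector_store(books: list[str], chunk_size: int = 50) -> list[dict]:
    """Chunk every book, embed every chunk, and keep text and vector together."""
    store = []
    for book in books:
        for chunk in chunk_text(book, chunk_size):
            store.append({"text": chunk, "vector": toy_embed(chunk)})
    return store


library = [
    "A three-legged coffee table needs a saw, a drill, wood glue and sandpaper."
]
store = build_vector_store(library, chunk_size=8)
print(len(store), "chunks stored")
```

In a real system the list would be replaced by a dedicated vector database and the toy function by a proper embedding model, but the shape of the pipeline stays the same.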

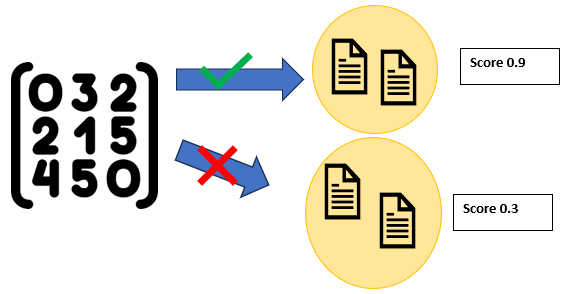

The vector database stores pages as vectors that can be semantically searched, providing responses that are based on the meaning behind the text and not just the text itself. The user’s query is converted into a vector by the same embedder, and the database calculates how similar that query is to each page, producing a score. The pages with the highest scores are then returned to the user.
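The scoring step can be sketched in a few lines of Python. This example assumes cosine similarity as the score, which is a common choice but not the only one, and it uses tiny hand-written 3-dimensional vectors in place of real embeddings. The names `cosine_similarity` and `retrieve` are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_vector, store, top_k=2):
    """Score every stored page against the query and return the top_k best."""
    scored = [
        (cosine_similarity(query_vector, vector), text)
        for text, vector in store
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Hand-made vectors for illustration only; real embeddings have
# hundreds or thousands of dimensions.
store = [
    ("Tools for a three-legged coffee table", [0.9, 0.1, 0.0]),
    ("Tools for building a chair", [0.5, 0.5, 0.1]),
    ("History of the printing press", [0.0, 0.1, 0.9]),
]
query_vector = [1.0, 0.0, 0.0]

results = retrieve(query_vector, store, top_k=2)
for score, text in results:
    print(f"{score:.2f}  {text}")
```

With these made-up vectors, the coffee table page scores highest, the chair page comes second, and the printing press page is left out.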

It is different from the keyword search traditionally associated with sites such as Amazon or Google. Keyword search does not have the same in-context accuracy as semantic search: one looks for matching words, while the other looks at the meaning behind the text.
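A small Python sketch shows where matching words alone falls short. The function `keyword_score` is a hypothetical, deliberately naive keyword matcher that counts shared words. Real keyword search engines are more sophisticated, so treat this only as an illustration of the idea.

```python
def keyword_score(query: str, page: str) -> int:
    """Count how many distinct words the query and the page have in common."""
    query_words = set(query.lower().split())
    page_words = set(page.lower().split())
    return len(query_words & page_words)


query = "tools to build a wooden table"

# Same meaning as the query, but phrased with different words.
relevant_page = "equipment required for constructing timber furniture"
# Shares many words with the query, but is about something else.
irrelevant_page = "how to build a wooden fence"

print(keyword_score(query, relevant_page))
print(keyword_score(query, irrelevant_page))
```

The page that actually answers the question shares no words with the query, while the page about fences shares several. A semantic search is meant to close exactly this gap by comparing meaning instead of wording.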

Now, going back to the library: we have stripped away all the books and bookshelves and replaced them with a database containing lots of vectors. YOU no longer go into the library to find the relevant pages, i.e. the pages for “tools needed to build a wooden 3-legged table”; the library that holds your vector database finds these pages for you. Additionally, the library returns only the pages that relate most closely to your question. For example, it will not return pages about tools for building chairs or about 6-legged tables.

Now that we have all the pages we need to build our table, another issue arises. Imagine the library returns 100 pages about your specific use case. That is still a lot of information to go through, so the question becomes how to get all this information in a concise form. This is where the LLM comes into play. It is important to understand that RAG is used in addition to an LLM, not as a way to retrain it on specific data.

Looking at the broader picture: we ask our question about building a wooden table, the library searches the vector database, and it outputs the pages relating to our query. Next, we feed these pages into our LLM. However, there are multiple ways the LLM can generate its response, so a concept called prompt engineering is used to tailor it. Prompt engineering is where we set some guidelines for the LLM, for example by specifying a prompt along the lines of “You are an experienced carpenter who advises on instructions in building furniture … etc”. The LLM generates a response based on the prompt provided and the pages retrieved, producing a concise and coherent answer for the user and, in effect, making the LLM an expert in carpentry.
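As a rough sketch of this last step, the Python below assembles the prompt that would be sent to the LLM: the persona, the retrieved pages, and the user’s question. The function name `build_prompt`, the prompt layout, and the sample pages are all assumptions made for illustration. The call to an actual LLM is left out because it depends on the provider you use.

```python
def build_prompt(question: str, pages: list[str], persona: str) -> str:
    """Combine the persona, the retrieved pages, and the question into one prompt."""
    context = "\n\n".join(
        f"[Page {number}] {page}" for number, page in enumerate(pages, start=1)
    )
    return (
        f"{persona}\n\n"
        "Answer the question using only the pages below. "
        "If the pages do not contain the answer, say so.\n\n"
        f"Pages:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Hypothetical persona and pages, standing in for real retrieval results.
persona = (
    "You are an experienced carpenter who advises on instructions "
    "in building furniture."
)
pages = [
    "A three-legged table needs angled leg joints cut with a hand saw.",
    "Use a drill and wood glue to fix each leg to the table top.",
]
question = "What tools do I need to build a wooden 3-legged coffee table?"

prompt = build_prompt(question, pages, persona)
print(prompt)
```

Telling the model to rely only on the supplied pages is one common way to keep the answer grounded in your own data, although the exact wording of such instructions is a matter of experimentation.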

Conclusion

We have seen that RAG looks up knowledge in an external knowledge base and can be seen as extending the LLM’s knowledge without having to retrain it on new data.

However, there are limitations. Quality content within the knowledge base not only enables RAG to pass the appropriate context, but also allows the LLM to better interpret these pieces of text. Asking questions outside the knowledge base can cause the LLM to hallucinate, as there will be no pages, or only weakly related pages, matching the user’s query. Poor-quality or incorrect information in the external knowledge base will lead to inaccurate or poor responses.

A major benefit of RAG is that the information source is visible to the user, which helps to identify any inaccuracies or potential biases in the generated response. In addition, the credibility of the generated response can be verified, leading to a more trustworthy solution. The flexibility to change the external knowledge base allows the overall solution to be adapted to many use cases.

Keeping the knowledge base up to date and maintaining good-quality data gives the LLM the best chance of producing accurate responses. With this, we can fill the biggest void in LLMs, which is insufficient or outdated knowledge. The true power of RAG is therefore the data behind it.
