This is the first in a series of Datalytyx blog posts on useful diagnostic queries you can run in Snowflake. To get us started, we’re going to look at ‘spilling,’ which according to Snowflake’s own definition is “When Snowflake cannot fit an operation in memory, it starts spilling data first to disk, and then to remote storage. These operations are slower than memory access and can slow down query execution a lot.”

TL;DR this query will find and analyse spilling queries but read on to learn more!

Snowflake have a couple of useful articles on how to recognise and deal with query spilling here and here, but to summarise:

- When executing a query, Snowflake loads data into RAM on the nodes that make up the selected warehouse

- The query is run in-memory, but if there isn’t enough RAM it will start to page to the SSDs on the warehouse

- If there still isn’t enough RAM + local storage, the warehouse will start to page to remote storage i.e., S3 or Azure Blob or GCP Cloud Storage

Local SSDs are fast but they’re slower than RAM, so a query that pages to local disk will run slower. Remote disk is much, much slower so a query that pages there will be extremely slow. Queries that spill over to local or remote disk will still complete (subject to query timeouts etc.) and if only a very small proportion of queries spill then maybe that’s fine.

There are two main implications though:

- Queries run slowly. Those slow queries might break something (a data pipeline, a user’s patience)

- The warehouse runs for longer, so the cost is higher

To ‘fix’ a spilling query there are two main options:

- Rewrite the query or the process that calls the query to be more efficient or to process data in batches

- Increase the warehouse size so there’s more RAM and SSD available

- The cost implication re: warehouse size is worth examining further: if a query runs on a S (small) warehouse in 2 mins but spills on a XS (x-small) warehouse and takes 5 mins – we could have saved money by using a larger warehouse!

| Warehouse size | Query A: a normal non-spilling query | Query B: an unusual spilling query |

|---|---|---|

| XS (1 credit / hour) | 3 mins -> .05 credits | 5 mins -> .08 credits |

| S (2 credits / hour) | 1.5 mins -> .05 credits | 2 mins -> .06 credits |

Query A scales linearly – we can run it on a bigger warehouse, and it finishes in half the time, but spends the same credits. However, when Query B is run on a larger warehouse, it gets the same benefit of extra resources but is sped up even further by reducing the need to write to disk. We can see that running on a larger warehouse is faster AND costs less.

In summary, queries that spill to disk are quite rare but can be disproportionally problematic. The simplest solution is often to ignore them, or to increase the size of the warehouse – but of course the best way to fix them is to optimise the queries themselves, but that’s something we should always be doing anyway and isn’t a quick fix.

To find these queries, you can look in the Snowflake web UI as in Snowflake’s links above, but you might want to report on spilling queries programmatically or include a tile on a Power BI dashboard like many of our clients. To do this, we use a query like https://github.com/datalytyx/snowflake-audit/blob/main/spilled_queries.sql

In this query, the CTE finds any queries with data spilled to disk or to remote disk, then in the main select we summarise the results. The filter on TOTAL_ELAPSED_TIME speeds up the analysis – it’s extremely unlikely that a query running for less than a second overwhelmed a warehouse so we can filter those rows out early on to improve performance.

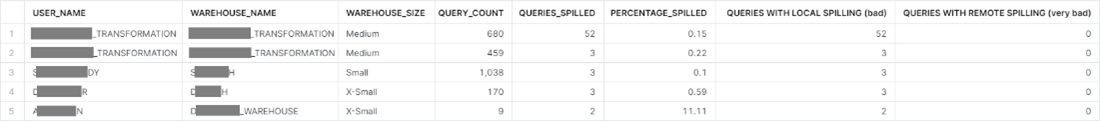

In my case, the results look like:

I can see that I don’t have any queries that spill to remote disk, which is good, but a few that spill to local disk. Most users only have a few spilling queries and those look like interactive work which is probably fine, but there’s a large number coming from a particular data pipeline process, and I can fix it by increasing the size of that warehouse.

If you’ve been using Snowflake for a while, try running the query above to see if you have any queries that spill. If you do, you can try some of the steps above to optimise your Snowflake execution, or of course you can contact us. We include this analysis in our Snowflake Audit Service where we have optimized by consumption of credits by up to 70% – resulting in more bang per credit. We work on projects like this all the time and can help you to find and fix issues like this in your data environment.

This blog is written by Dan Martyr, Datalytyx Solutions Architect.

0 Comments